Some people draft an email with ChatGPT in five minutes. Others spend longer than they would have without it. Some use Claude to structure a report and walk away with a strong outline. Others get a generic summary they have to rewrite from scratch. The tools are the same. The results are not.

This gap is not about access to better models, faster computers, or secret prompts. It comes down to a capability called AI fluency: the practical skill of working with AI systems effectively, efficiently, ethically, and safely. AI fluency is not the same as prompt engineering, and it is not the same as AI literacy. It covers the full arc of working with AI, from deciding whether to use it at all to taking responsibility for what it produces.

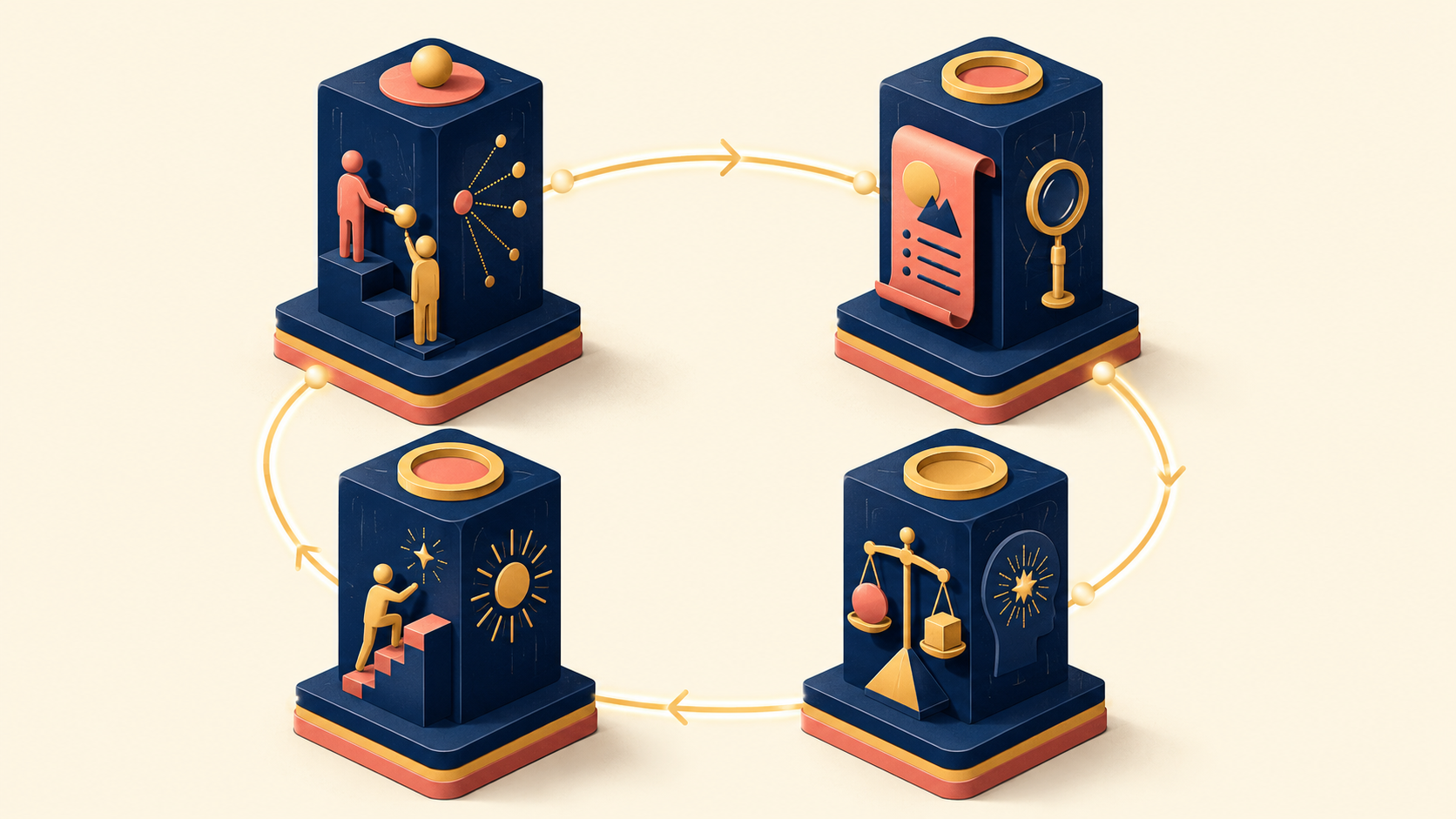

The clearest way to build this capability is the 4D Framework, developed by professors Rick Dakan and Joseph Feller in partnership with Anthropic. The 4Ds — Delegation, Description, Discernment, and Diligence — break AI fluency into four concrete competencies you can practice and improve. This guide walks through what each one means, how they connect, and how to apply them to real work.

What is AI Fluency?

AI fluency is the practical capability to interact with AI systems in a way that is effective, efficient, ethical, and safe. Each of these four qualities matters on its own:

- Effective: You actually get the output you wanted.

- Efficient: You reach that output without unnecessary trial and error.

- Ethical: You do not produce work that harms others or violates their rights.

- Safe: You manage the risks of leaked data, misinformation, or misuse.

The word “fluency” is borrowed from language learning for a reason. Speaking a language fluently is not the same as knowing its grammar. A fluent speaker reads cultural context, picks the right register for the situation, and repairs misunderstandings on the fly. Fluency is the full ability to communicate, not a checklist of rules.

AI fluency works the same way. Many people equate it with writing good prompts, and prompt engineering is part of it. But fluency goes further. It includes knowing when to call on AI in the first place, how to communicate with it, how to evaluate what it returns, and how to own the results. A fluent operator designs and manages the whole collaboration, not just the input box.

This matters because AI tools have become almost universal in knowledge work, while the skill gap in using them well has widened. The same prompt window produces dramatically different value depending on who is sitting in front of it. Fluency is what closes that gap.

Three Modes of Collaborating with AI: Automation, Augmentation, Agency

Working with AI is not a single activity. There are three distinct modes of collaboration, and each one calls for a different stance from the human in the loop.

| Mode | Human role | AI role | Human involvement | Example |
|---|---|---|---|---|
| Automation | Issues clear instructions | Executes assigned tasks | High | Sorting inbound emails, cleaning data |
| Augmentation | Thinks and decides alongside AI | Acts as a thought partner | Medium | Drafting strategy documents, code review |
| Agency | Designs principles and standards | Decides and acts independently | Low (concentrated upfront) | AI agents, automated workflows |

Automation

Automation is the most direct mode. You define the task, AI runs it. Consider an e-commerce team receiving 200 customer emails a day. Many of them follow predictable patterns — “When will my order arrive?” or “I want to return this item.” Configuring AI to classify these messages and draft first-pass replies is a textbook automation use case. Automation fits when the task is repetitive, the quality bar is well defined, and the volume is too high for a person to handle alone.
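As a rough illustration of the classify-and-draft pattern, the sketch below uses plain keyword matching as a stand-in for an AI classifier. The category names, keywords, and reply templates are hypothetical. A real setup would call a model instead, and would still route anything it cannot place to a person.

```python
from typing import Optional

# Hypothetical categories and trigger phrases. Keyword matching stands in
# for the AI classifier a real system would call.
CATEGORIES = {
    "shipping": ["order arrive", "tracking", "delivery"],
    "returns": ["return", "refund", "exchange"],
}

# Hypothetical first-pass reply templates, one per category.
TEMPLATES = {
    "shipping": "Thanks for reaching out. Here is the latest status of your order.",
    "returns": "Sorry the item did not work out. Here is how to start a return.",
}


def classify(email_text: str) -> str:
    """Return the first category whose keywords appear, else 'needs_human'."""
    text = email_text.lower()
    for category, keywords in CATEGORIES.items():
        if any(keyword in text for keyword in keywords):
            return category
    return "needs_human"


def draft_reply(email_text: str) -> Optional[str]:
    """Draft a first-pass reply, or return None when a person should handle it."""
    return TEMPLATES.get(classify(email_text))
```

The `needs_human` fallback is the important design choice here: automation covers the predictable patterns, and everything else stays with a person.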

Augmentation

Augmentation is a step further. Here the human and AI work as thinking partners. You set a direction, AI extends it, you refine what AI offered, AI rebuilds based on your refinement. Imagine writing a business plan for a subscription lunch service aimed at office workers in their twenties. You ask AI to estimate market size, then correct the assumptions using your industry experience, then ask AI to recalculate with your inputs. The key difference from automation is that human judgment and AI capability take turns shaping the output.

Agency

Agency is the most autonomous mode. Instead of instructing AI on each task, you set the principles and standards that let it act on its own. For a social media management agent, you might specify: “Our brand voice is friendly but professional. Avoid mentioning competitors. Publish industry trend content three times a week. Respond to negative comments within 24 hours in an empathetic tone.” You are not handing over individual tasks — you are defining the rules of engagement.
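One way to picture "rules of engagement" is as a small configuration object that an agent, or a reviewer checking the agent, can consult. The sketch below is only an assumption about how such rules might be stored. `BrandRules` and `violations` are hypothetical names, and the check covers just the competitor rule.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class BrandRules:
    """Standing rules for a hypothetical social media agent."""
    voice: str = "friendly but professional"
    banned_terms: List[str] = field(default_factory=list)  # e.g. competitor names
    trend_posts_per_week: int = 3
    negative_reply_deadline_hours: int = 24


def violations(draft: str, rules: BrandRules) -> List[str]:
    """Return the banned terms that appear in a draft post (case-insensitive)."""
    text = draft.lower()
    return [term for term in rules.banned_terms if term.lower() in text]
```

For example, `violations("Unlike ACME, we ship fast", BrandRules(banned_terms=["Acme"]))` flags the draft, while tone and empathy would still need a model or a human to judge.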

None of these modes is inherently better. The right choice depends on the work in front of you, and fluent operators move between them deliberately.

The 4D Framework: Four Core Capabilities of AI Fluency

The 4D Framework breaks AI fluency into four competencies, each named with a word that starts with D. Together they cover the full lifecycle of working with AI.

| Competency | Core question |
|---|---|
| Delegation | “Is this the right task to hand off to AI?” |
| Description | “How do I communicate what I want?” |
| Discernment | “How good is the output AI returned?” |
| Diligence | “What am I responsible for in this collaboration?” |

These four capabilities are not independent. They form a loop. Poor delegation means even excellent description leads to the wrong result. Skipping discernment means problems leak into the diligence stage. The next sections walk through each one in turn, and the final section shows how they connect in a real scenario.

┌─────────────────────────────────────────────────┐
│              The 4D Framework Flow              │
│                                                 │
│ Delegation                                      │
│ "Should I hand this task to AI?"                │
│    │                                            │
│    ▼                                            │
│ Description                                     │
│ "How do I communicate what I want?"             │
│    │                                            │
│    ▼                                            │
│ Discernment                                     │
│ "Is the output good enough?"                    │
│    │                                            │
│ ┌──┴───────────┐                                │
│ ▼              ▼                                │
│ Satisfactory   Lacking → Back to Description    │
│ │                                               │
│ ▼                                               │
│ Diligence                                       │
│ "I take responsibility for this output."        │
│                                                 │
└─────────────────────────────────────────────────┘

Delegation: Deciding What to Hand Off to AI

Delegation is the capability to thoughtfully decide which tasks to do yourself, which to do with AI, and which to hand off entirely, and then allocate the work accordingly.

The key word is “thoughtfully.” Delegation is not the same as “just ask AI to do it.” It means understanding the nature of the work, judging whether AI can do it well, and then designing the right split. Delegation has three sub-capabilities.

Problem Awareness

Problem awareness is recognizing your own goal clearly before you involve AI. Many people skip this step and throw the task at AI immediately, which is like turning on the GPS before deciding where you are going. Even the best GPS is useless without a destination.

For example, sending AI a vague request like “Help me build a marketing strategy” produces a generic answer. But if you first articulate the problem yourself — “We sell a B2B SaaS product. Our main issue is customer churn. I want to focus on retention rather than acquisition. The budget is about $5,000 per month.” — AI can produce something far more useful. Problem awareness sharpens what you ask for.

Platform Awareness

Platform awareness is knowing what each AI system is good and bad at. AI is not one tool. Some models are strongest at text, some at images, some at code. Even within text generation, different models have different strengths.

| Criterion | What to check |
|---|---|
| Task fit | Is this AI suited to the kind of work I need (text, image, code)? |
| Accuracy | How much can I trust the output? Is this a domain where I need to verify facts? |
| Context handling | Can this AI understand and apply complex background information? |
| Security and privacy | Is it safe to put sensitive information into this AI? How is the data handled? |

Task Delegation

Task delegation is the actual allocation: deciding which parts go to AI and which stay with you. “Send everything to AI” and “do everything yourself” are rarely the best answers. Inside a single project, some pieces fit AI and others belong to a person.

Consider writing a job posting. The breakdown might look like this:

- Good for AI: Researching the standard structure of postings in your industry, drafting a first-pass job description for a similar role, polishing grammar and tone.

- Stays with the human: Reflecting your specific company culture, deciding the real qualifications based on team needs, finalizing sensitive details like salary and benefits.

Good delegation is closer to lineup design than to handoff. A soccer coach assigning positions weighs each player’s strengths, the opponent’s tactics, and the shape of the match. Delegation with AI works the same way: a strategic judgment about what the task really requires and how far AI can carry it.
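The job-posting split above can be written down as an explicit plan, which makes the allocation easy to review before any work starts. This is a minimal sketch. The `Task` structure and the short reasons attached to each item are illustrative assumptions, not part of the framework.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Task:
    name: str
    owner: str   # "ai" or "human"
    reason: str  # illustrative justification for the assignment


JOB_POSTING_PLAN = [
    Task("Research standard posting structure", "ai", "pattern-based, low risk"),
    Task("Draft first-pass job description", "ai", "a person will revise it"),
    Task("Polish grammar and tone", "ai", "mechanical editing"),
    Task("Reflect company culture", "human", "needs insider knowledge"),
    Task("Decide real qualifications", "human", "depends on team needs"),
    Task("Finalize salary and benefits", "human", "sensitive details"),
]


def tasks_for(owner: str, plan: List[Task]) -> List[str]:
    """List the task names assigned to one owner."""
    return [task.name for task in plan if task.owner == owner]
```

Calling `tasks_for("human", JOB_POSTING_PLAN)` returns the three items that stay with a person.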

Description: Communicating Intent Effectively (Prompt Engineering)

Description is the capability to communicate your intent to AI clearly enough to create a productive collaboration. This overlaps heavily with what most people call prompt engineering, but description is broader. It is not only about writing a good prompt for an output — it covers how you want AI to approach the work and how you want it to behave during the conversation. Description has three dimensions.

Product Description defines the output itself. Format, audience, length, style, tone. The same topic produces radically different results depending on how tightly you specify the deliverable:

[Underspecified]
"Tell me about sustainable energy."

[Well specified]
"Make a comparison table of the pros and cons of
solar and wind power. The audience is general
readers unfamiliar with the energy industry. Keep
each cell to 2–3 sentences."

Useful elements to specify in product description:

- Format: Table, list, report, email?

- Length: A paragraph, a page, 3,000 words?

- Audience: Expert, beginner, internal team?

- Tone: Formal, conversational, academic?
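If you write similar requests often, the four elements above can be turned into a small template so none of them gets forgotten. The helper below is a sketch under that assumption. `product_prompt` is a hypothetical name, and the exact wording of the labels is a matter of taste.

```python
def product_prompt(task: str, fmt: str, length: str, audience: str, tone: str) -> str:
    """Assemble a request that states the deliverable explicitly, one element per line."""
    lines = [
        f"Task: {task}",
        f"Format: {fmt}",
        f"Length: {length}",
        f"Audience: {audience}",
        f"Tone: {tone}",
    ]
    return "\n".join(lines)


# Example: the solar and wind request from above, restated with the template.
print(product_prompt(
    task="Compare the pros and cons of solar and wind power",
    fmt="Comparison table",
    length="2-3 sentences per cell",
    audience="General readers unfamiliar with the energy industry",
    tone="Plain and neutral",
))
```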

Process Description guides how AI approaches the task, not just what it produces. The same end goal can be reached with very different quality depending on the path AI takes.

[Process described]
"Analyze 50 user reviews of our app. Approach it in this order:
Step 1: Classify each review as positive, negative, or neutral.
Step 2: From the negative reviews, pull out repeating keywords.
Step 3: Group those keywords into the top 3 complaint themes.
Step 4: For each theme, propose a concrete improvement."

When you spell out the steps, AI is less likely to skip ahead or miss the point. It is the same reason a complex recipe fails less often when you follow it in order.
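A stepwise prompt like the one above is easy to generate from a plain list of steps, which helps when the same process is reused across tasks. The function name `process_prompt` is hypothetical and the phrasing is only one reasonable choice.

```python
from typing import List


def process_prompt(task: str, steps: List[str]) -> str:
    """Append an explicitly ordered, numbered list of steps to a task statement."""
    lines = [f"{task} Approach it in this order:"]
    lines += [f"Step {number}: {step}" for number, step in enumerate(steps, start=1)]
    return "\n".join(lines)


print(process_prompt(
    "Analyze 50 user reviews of our app.",
    [
        "Classify each review as positive, negative, or neutral.",
        "From the negative reviews, pull out repeating keywords.",
        "Group those keywords into the top 3 complaint themes.",
        "For each theme, propose a concrete improvement.",
    ],
))
```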

Performance Description sets the tone and style of AI’s behavior during the work. It is not about what AI produces but about how AI engages with you.

| Performance instruction | Likely AI behavior |
|---|---|
| “Be blunt about the weaknesses. Skip the compliments.” | Direct critique with concrete fix suggestions |
| “Be encouraging while pointing out gaps.” | Strengths first, then gentle improvement areas |
| “Act like a Socratic tutor. Lead me with questions.” | No direct answers, only questions that prompt thinking |

Performance description matters most when you are using AI to learn. Asking AI to give you the answer is very different from asking it to lead you toward the answer, and the learning outcomes differ accordingly.

Six practical techniques for effective description

Once the three dimensions of description make sense, the next question is how to apply them in actual prompts. The following techniques are the working toolkit.

1. Provide context. Background information sharpens results. Tell AI what you want, but also why and in what situation:

[No context]
"Tell me about customer churn."

[With context]
"I run a B2B SaaS product. Over the past three months,
monthly churn rose from 2% to 5%. Most of the lost
customers fail to convert from the free trial. Suggest
three strategies to reduce churn in this situation."

2. Show an example of what you want. A single concrete example often communicates more than a paragraph of explanation:

"Translate technical sentences into plain language,
following this example:

Before: 'The module operates on an asynchronous event-loop basis.'
After: 'This component can handle several tasks at once.'

Now rewrite this sentence the same way:
'A RESTful API uses HTTP methods to manage resource state.'"

3. Specify output conditions. Pin down format, length, and structure to leave less room for AI to guess:

"Write to these conditions:
- Format: Numbered list
- Each item: A one-line title + 2–3 sentences of explanation
- Total items: 5
- Tone: Friendly but professional blog voice"

4. Break complex work into steps. A single prompt that contains too much asks AI to juggle too many things at once. Splitting the work into clear stages improves both completeness and quality.

5. Ask AI to think first. Instead of demanding an immediate answer, ask AI to reason through the options before concluding. This works especially well for judgment calls:

"Before recommending which of these two tech stacks to choose,
first analyze the pros and cons of each in our specific
situation. Then make your final recommendation."

6. Define a role and tone. Assigning AI a perspective changes the depth and angle of the response:

"Review this survey draft from the perspective of a UX
researcher with 10 years of experience. Focus on whether
any questions might cause response bias and whether the
question order is appropriate."

One bonus technique is worth its own line: ask AI to improve your prompt. Tell AI what you are trying to accomplish, share your current prompt, and ask what is missing or unclear. AI will often surface gaps you would not have noticed.
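Of the six techniques, showing an example (technique 2) is the easiest to turn into a reusable helper. The sketch below builds that kind of prompt from a list of before/after pairs. `few_shot_prompt` is a hypothetical name, and the layout simply mirrors the translation example above.

```python
from typing import List, Tuple


def few_shot_prompt(instruction: str, examples: List[Tuple[str, str]], new_input: str) -> str:
    """Build a prompt that demonstrates the desired rewrite before asking for a new one."""
    lines = [instruction, ""]
    for before, after in examples:
        lines.append(f"Before: '{before}'")
        lines.append(f"After: '{after}'")
        lines.append("")
    lines.append("Now rewrite this sentence the same way:")
    lines.append(f"'{new_input}'")
    return "\n".join(lines)


print(few_shot_prompt(
    "Translate technical sentences into plain language, following this example:",
    [("The module operates on an asynchronous event-loop basis.",
      "This component can handle several tasks at once.")],
    "A RESTful API uses HTTP methods to manage resource state.",
))
```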

There is no single correct way to write a prompt. The one consistent pattern, though, is that AI collaboration improves through iteration. Expecting the first attempt to be perfect is the wrong mental model. The realistic strategy is to run a fast loop: see the output, identify the gap, revise the request.
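The see, identify, revise loop can be sketched in a few lines. Both callables here are placeholders: `generate` stands for whatever model call you use, and `find_gaps` stands for your own review of the output, which in practice is a human judgment rather than a function.

```python
from typing import Callable, List, Tuple


def refine(
    prompt: str,
    generate: Callable[[str], str],
    find_gaps: Callable[[str], List[str]],
    max_rounds: int = 3,
) -> Tuple[str, str]:
    """Revise the prompt until no gaps remain or max_rounds revisions are used.

    Returns the final prompt and the final output.
    """
    output = generate(prompt)
    for _ in range(max_rounds):
        gaps = find_gaps(output)
        if not gaps:
            break
        # Fold the identified gaps back into the request and try again.
        prompt = prompt + "\nPlease also address: " + "; ".join(gaps)
        output = generate(prompt)
    return prompt, output
```

The cap on rounds is deliberate. If three revisions have not closed the gap, the problem is more likely in the delegation decision than in the wording.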

Discernment: Critically Evaluating AI Output

Discernment is the capability to critically evaluate the output AI produces, the process it used, and the way it behaved during the work.

If description is “communicating clearly to AI,” discernment is “judging clearly what AI returned.” They are two sides of the same conversation. Either one alone is incomplete.

Discernment matters because AI generates output in a smooth, confident tone regardless of whether the content is correct. Without active verification, smooth wrong answers slip through as easily as smooth right ones. This is the structural risk that makes discernment essential. Discernment has three dimensions.

Product Discernment

Product discernment evaluates the final output. Quality criteria include accuracy, appropriateness, completeness, and consistency. Useful checks:

- Accuracy: Are the facts right? Dates, numbers, proper nouns?

- Appropriateness: Does it fit the context I described?

- Completeness: Is anything missing? Are key perspectives absent?

- Consistency: Do earlier and later parts contradict each other?

Watch especially for hallucination — AI stating things that do not exist with full confidence. Catching hallucinations is one of the core jobs of product discernment.
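No script can detect a hallucination, but a small helper can make the accuracy check faster by pulling out the sentences most worth verifying. The sketch below flags any sentence that contains a digit, on the assumption that dates, percentages, and counts are the claims most in need of a source. It will miss invented names and quotations, which still need a human read.

```python
import re
from typing import List

# Any digit marks a sentence as containing a checkable figure.
HAS_DIGIT = re.compile(r"\d")


def sentences_to_verify(text: str) -> List[str]:
    """Return the sentences that contain a number, for manual fact-checking."""
    # Split on whitespace that follows sentence-ending punctuation.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [sentence for sentence in sentences if HAS_DIGIT.search(sentence)]


draft = (
    "Churn rose from 2% to 5% last quarter. "
    "Customers like the product. "
    "The market grew in 2023."
)
for sentence in sentences_to_verify(draft):
    print("CHECK:", sentence)
```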

Process Discernment

Process discernment evaluates how AI arrived at its conclusion. A plausible-sounding answer can still rest on a broken chain of reasoning. Suppose you ask AI, “Should we raise our prices?” and it answers, “Yes, you should.” The conclusion is clean, but the reasoning may have problems:

- Competitor pricing was considered, but customer price sensitivity data was ignored.

- The argument was “below industry average, so raise it,” without weighing whether your product is intentionally positioned as low-cost.

- Only the short-term effect of a price increase was analyzed, not the long-term churn impact.

Process discernment tracks not the answer but the path to the answer.

Performance Discernment

Performance discernment evaluates how AI behaved during the collaboration:

- Did AI grasp the real intent of my question, or only the surface meaning?

- When I gave feedback, did AI actually incorporate it, or repeat the same pattern?

- Is AI’s level of explanation right for me — not too basic, not too technical?

This kind of judgment builds across multiple interactions rather than in a single exchange. Noticing patterns like “this AI weakens on this kind of request” or “this AI integrates feedback well” is performance discernment in practice.

Discernment is closer to “verify” than to “doubt.” An experienced editor reviewing a reporter’s draft is not assuming the reporter is wrong — they are taking responsibility for the final product. Reviewing AI output is the same. The habit of checking once more before publishing in your own name is where discernment begins.

Diligence: Taking Responsibility for AI Collaboration

Diligence is the capability to act responsibly across the process and outputs of working with AI.

While delegation, description, and discernment focus on making AI use effective and efficient, diligence is the competency that keeps it ethical and safe. As AI use spreads, questions like “Who is responsible for AI-generated work?” and “Should I disclose that I used AI?” get harder to defer. Diligence is the capability that lets you answer them thoughtfully. It has three dimensions.

Creation Diligence

Creation diligence is choosing AI systems and interaction patterns deliberately. Not every AI tool has the same security and privacy posture. Whether your inputs are used for training, shared with third parties, or stored — and for how long — varies tool by tool.

Checks under creation diligence:

- Data handling: How is my input processed and stored?

- Sensitive information: Is it safe to put customer data or confidential business information into this AI?

- Tool fit: Is this AI the appropriate choice for this task?

If you need to analyze unreleased financial data, a private solution running in your company’s environment is the diligent choice over a free AI tool that sends data to external servers.
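When a private deployment is not available, one partial mitigation is to strip obvious identifiers before any text leaves your environment. The sketch below masks email addresses and phone-like numbers with two illustrative regular expressions. These patterns are assumptions and are far from complete, so treat this as a safety net rather than a substitute for choosing the right tool.

```python
import re

# Illustrative patterns only. Real data needs broader, tested rules.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


print(redact("Contact jane.doe@example.com or 555-123-4567."))
```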

Transparency Diligence

Transparency diligence is disclosing AI’s role honestly to the people affected by the work. This is tricky because how much to disclose varies by context.

| Context | Transparency expectation | Example |
|---|---|---|
| Academic and educational | High | Stating in a paper or assignment which parts AI helped with |
| Business | Medium to high | Letting the team know that AI drafted the first version of a client document |
| Personal projects | Discretionary | Choosing whether to note AI assistance in a personal blog post |

The core question is: “Does the person receiving this work need to know AI was involved?” If yes, find an appropriate way to disclose it.

Deployment Diligence

Deployment diligence is taking final responsibility for the work you ship after AI helped produce it. Whether AI wrote the draft, suggested the idea, or ran the analysis, the person who decided to use the output is the one accountable for it. “The AI told me so” is not a defense when something goes wrong.

Checks under deployment diligence:

- Did I directly verify the facts in this output?

- Does the content infringe anyone’s copyright?

- Does the work contain unintentional bias?

- Am I confident enough to attach my name to this?
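The four checks above can be kept as an explicit sign-off record, so that shipping is blocked until each one has been answered by a person. This is a minimal sketch. The class and field names are hypothetical, and every flag is meant to be set by a human reviewer, not inferred automatically.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class DeploymentChecklist:
    facts_verified: bool = False
    copyright_cleared: bool = False
    bias_reviewed: bool = False
    willing_to_sign: bool = False

    def open_items(self) -> List[str]:
        """Names of the checks that have not been confirmed yet."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def ready_to_ship(self) -> bool:
        """True only when every check has been confirmed."""
        return not self.open_items()


review = DeploymentChecklist(facts_verified=True)
print(review.ready_to_ship(), review.open_items())
```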

Diligence rests on a simple recognition: even when you work with AI, final responsibility is yours. An architect using CAD software is not less responsible for the safety of the building. The software is a tool. The signature on the drawing belongs to the architect. AI is a powerful tool. The signature still belongs to the person.

How the 4Ds Connect: A Real-World Scenario

The 4D Framework looks linear when described one capability at a time, but in practice the four capabilities run together and reinforce each other. A short scenario makes this concrete.

Scenario: Drafting a quarterly business report

A startup’s business development lead needs to prepare a quarterly performance report for investors. Here is how the 4Ds apply.

| 4D Stage | Application |
|---|---|
| Delegation | First, list the tasks involved: gathering data, calculating KPIs, summarizing market trends, drafting the report, building visuals. Decide that market summaries and the report draft go to AI. KPI calculation and key message decisions stay with the human. Sensitive financial numbers are kept out of AI entirely. |
| Description | Tell AI the deliverable: “Audience is investors, length is three pages, tone is data-driven and concise.” Add process guidance: “Cover market trends first, then connect them to our performance.” Add a performance instruction: “Center the story on growth rate and unit economics, since those are what investors will look at.” |
| Discernment | Read AI’s draft. Use product discernment to verify market data accuracy. Use process discernment to check the logic connecting market trends to performance. Notice that some figures rely on outdated sources and request a revision for those sections. |
| Diligence | Disclose to the team that market analysis sections were drafted with AI assistance. Personally verify every number and claim in the final report. Send it to investors under your own name. |

Each capability supports the next. Bad delegation throws off description from the start. Weak discernment undermines the basis for diligence. The 4Ds are not a checklist to walk through once — they are a loop that keeps reinforcing the quality of the collaboration.

Conclusion

AI fluency is not a synonym for prompt engineering or AI literacy. It is the broader practical capability to work with AI systems effectively, efficiently, ethically, and safely across the full collaboration. The 4D Framework — Delegation, Description, Discernment, Diligence — gives that capability a concrete shape anyone can practice and improve.

| Capability | One-line summary |
|---|---|
| Delegation | Strategically split work between AI and yourself. |
| Description | Communicate the deliverable, the approach, and the behavior you want. |
| Discernment | Critically evaluate the output, the reasoning, and the interaction. |
| Diligence | Take responsibility for transparency and the final result. |

AI tools will keep changing fast. New models, new features, new interfaces. What does not change is the underlying skill of deciding what to hand off, communicating clearly, verifying critically, and owning the outcome. Those four capabilities are the durable foundation. The tool changes; fluency carries over.
